Healthcare leaders no longer control the first touchpoint in the patient journey.

Increasingly, that role belongs to consumer AI.

At a recent HIMSS Central & Southern Ohio Chapter panel, leaders across clinical, informatics, and digital strategy explored a hard truth: patients are already using generative AI to interpret symptoms, review lab results, and decide when (or whether) to seek care. Health systems aren’t introducing AI into the journey. They’re entering a journey that’s already begun.

For organizations like Advizex, the question isn’t whether AI will shape patient behavior. It’s how health systems can respond so that technology strengthens trust rather than eroding it.

The Post-Cures Reality: Information Without Understanding

Since the 21st Century Cures Act expanded patient access to clinical data, individuals now receive lab results, imaging reports, and clinician notes in near real time. Transparency increased. Comprehension did not.

As Dr. Abi Omoloja, Chief Medical Informatics Officer at Dayton Children’s Hospital, noted, patients are flooded with data written in medical language that assumes years of training. The gap between access and understanding is where consumer AI has stepped in. Patients use it to translate jargon, summarize visits, and connect the dots across multiple reports. AI has become an on-demand interpreter for modern healthcare.

This is not fringe behavior. Dr. Omoloja now routinely asks, “What did ChatGPT tell you?” as part of the intake conversation.

“ChatGPT Is Now Your Digital Front Door”

Dan Thelen of Nordic Global captured the shift bluntly: consumer AI tools have effectively become healthcare’s digital front door. Whether health systems endorse it or not, patients are forming impressions, expectations, and even anxieties before ever logging into a portal or scheduling an appointment.

That changes the stakes:

• Patients arrive more informed — or misinformed

• Clinical visits increasingly include AI-generated hypotheses

• Trust now hinges on how providers respond, not whether AI is used

Forward-looking systems are responding by guiding patients on how to use AI safely, not telling them to avoid it.

Consumer AI vs. Clinical AI: Context, Control, Consequence

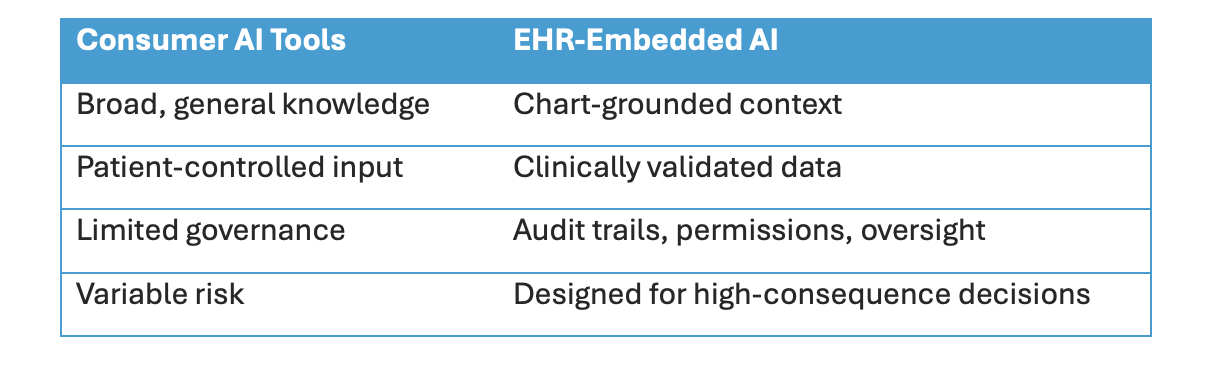

A central theme of the discussion was the difference between public generative AI and AI embedded in clinical systems.

The takeaway: high-risk, high-consequence decisions belong inside governed, clinical systems. Low-risk interpretation and preparation can occur outside, but only with transparency and education.

Trust Is Still the Currency

AI didn’t change healthcare’s core dynamic: trust between patients and clinicians.

Panelists emphasized that AI can either strengthen that relationship or strain it:

Positive impact

• AI scribes free clinicians for more face-to-face time

• Patients arrive better prepared and less anxious

• Educational support continues beyond the visit

Risks

• Overconfidence in AI suggestions

• Confusion about what AI is doing versus what humans are doing

• Visible AI errors outweighing many quiet successes

Patients increasingly judge experiences based on how technology supports or distracts from human care. Transparency about AI’s role is no longer optional.

Why Healthcare AI Efforts Lose Momentum

When AI initiatives fail, it’s rarely the model. It’s everything around it:

• Promises exceed frontline experience

• Tools add clicks instead of saving time

• No clear owner in production

• No metric that proves value to clinicians

• Governance slows decisions more than pilots do

As one panelist summarized: if everyone owns AI, no one owns it. Sustainable adoption requires named ownership, real workflow integration, and measurable frontline benefit.

What Health Systems Should Do Now

The panel’s advice was consistent and practical:

• Be strategic, not reactive. Start with the problem you’re solving — not the technology.

• Segment patients like consumers. Different populations need different AI support models.

• Bring patients to the table. Patient and family advisory councils are essential for evaluating new AI tools before rollout.

• Guide AI use, don’t ignore it. Provide safe-use guidance, especially around PHI and clinical decisions.

• Build capability before platforms. Test, learn, refine governance, then scale.

The Bottom Line

Patients aren’t waiting for healthcare to “figure out AI.” They’re already using it to make decisions.

Health systems that acknowledge this reality and position themselves as trusted guides in an AI-enabled world will deepen relationships. Those that dismiss it risk losing relevance at the very start of the patient journey.

Consumer AI may be the new digital front door. Trust still decides who patients let inside.